Brendan O'Leary's previous blog posts

// This file contains a collection of my blog posts.

// This includes posts published on boleary.dev as well as external publications.

// Each blog post is separated by a line containing only '---'.

// This data is intended for use by Large Language Models (LLMs)

// to understand the topics, themes, and writing style of my blog content.

// Each blog post entry is in Markdown format

// Each entry should also contain a Title, Description, Date, and relevant Tags.

// External posts include Publication name and Original URL.

---

Title: Your AI Has Genie Energy (And That's a Problem)

Subtitle: Why prompting AI is less like programming and more like making wishes—with all the catastrophic literalness that implies

Description: AI coding assistants aren't malicious like genies, but they share the same fundamental problem: they respond to what you said, not what you meant. Here's how to make better wishes.

Date: 2/4/2026

Source: boleary.dev

Tags: AI, Tech

My daughter has been obsessed with Amelia Bedelia books lately. If you're not familiar, Amelia is a housekeeper who takes every instruction completely literally. When told to "draw the drapes," she sketches them. Asked to "dress the chicken," she puts clothes on it. Told to "put out the lights," she hangs them on the clothesline.

Reading these to my kids at bedtime, I keep thinking: *this is exactly what prompting an AI feels like.*

## The Genie Problem

There's an old thought experiment about genies that philosophers love. You find a lamp, rub it, and a genie appears offering three wishes. The catch? The genie will interpret your wish as literally and maliciously as possible.

Wish for "a million bucks"? Here's a million male deer trampling your house. Ask to "never feel cold again"? Your nerve endings stop working. Request "eternal life"? Enjoy outliving the heat death of the universe, conscious and alone.

The genie isn't stupid. It understands exactly what you *meant*. It just doesn't care. It fulfills the letter of your wish while ignoring the spirit entirely.

AI coding assistants aren't malicious like the genie. But they share the same fundamental problem: they respond to what you *said*, not what you *meant*.

## Amelia Bedelia, Senior Software Engineer

I asked Claude to "clean up this function." It deleted most of the code. Technically cleaner!

I asked it to "make this faster." It removed all the error handling. Much faster now—when it works.

I told it to "add some comments." It added a comment above every single line. `// increment i` above `i++`.

None of these responses were wrong. They were exactly what I asked for. The problem was that my prompts were vague enough that a helpful but literal-minded assistant could reasonably interpret them many ways.

The [Greptile State of AI Coding 2025 report](https://www.greptile.com/state-of-ai-coding-2025) found that developers using AI tools shipped 76% more code year over year. But raw output isn't the same as useful output. You can generate a lot of code quickly if you're willing to accept "technically correct but missing the point" as a passing grade.

## Context Is Everything (And You Probably Forgot to Provide It)

Amelia Bedelia isn't *wrong* when she draws the drapes. She's missing context that any reasonable person would have. The homeowner assumes shared understanding. Amelia assumes nothing beyond the literal words.

AI assistants are the same. They have zero context about:

- Your project's specific conventions

- Why you made the architectural decisions you made

- What "clean" or "fast" means in your codebase

- The parts of the codebase you haven't shown them

- What you'll regret tomorrow

[Liz Fong-Jones captured this well](https://bsky.app/profile/lizthegrey.com/post/3mb65fnjiis25): "In essence a language model changes you from a programmer who writes lines of code, to a programmer that manages the context the model has access to."

Managing context is the new core skill. Not managing the AI's feelings or convincing it to help you. Managing what it knows about your situation.

## Making Better Wishes

The secret to working with genies (and AI) isn't cleverness. It's precision.

Bad wish: "Make me rich."

Better wish: "Add $10 million in legally obtained US dollars to my existing bank account at Chase, account number XXXX, without triggering any regulatory flags, tax complications, or negative consequences to myself, my family, or anyone else."

The better version isn't just more specific—it anticipates failure modes and closes loopholes.

Same with prompts:

Bad prompt: "Refactor this function to be cleaner."

Better prompt: "Refactor this function to use early returns instead of nested conditionals. Keep all existing error handling. Don't change the function signature or return type. Match the code style in utils/helpers.ts."

The better prompt isn't longer for the sake of being longer. It specifies *what kind* of clean you mean, *what to preserve*, and *what to match*.
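To make the better prompt concrete, here is a minimal sketch of the kind of change it asks for, written in Python for brevity. The function names and the order fields (`items`, `paid`) are hypothetical and not taken from any real codebase. Both versions keep the same signature, return type, and error handling; only the nesting changes.

```python
from typing import Optional


def shipping_label_nested(order: Optional[dict]) -> str:
    """Before: nested conditionals bury the happy path."""
    if order is not None:
        if order.get("items"):
            if order.get("paid"):
                return f"Ship {len(order['items'])} item(s)"
            else:
                raise ValueError("order is not paid")
        else:
            raise ValueError("order has no items")
    else:
        raise ValueError("order is missing")


def shipping_label(order: Optional[dict]) -> str:
    """After: guard clauses exit early; same signature, same errors."""
    if order is None:
        raise ValueError("order is missing")
    if not order.get("items"):
        raise ValueError("order has no items")
    if not order.get("paid"):
        raise ValueError("order is not paid")
    return f"Ship {len(order['items'])} item(s)"
```

Notice that a vague "make it cleaner" could just as easily have produced a version that dropped the three error cases. The explicit constraints are what keep them.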

## Three Things That Actually Help

**Say what you mean, precisely.** If you want error handling preserved, say so. If you want the code style to match existing files, say which files. If you want tests, say what kind and how many. Ambiguity is an invitation for the genie to exercise creativity in ways you won't like.

**Specify what NOT to do.** Genies love loopholes. AI assistants love "helpful" additions you didn't ask for. "Don't add any new dependencies." "Don't change the API surface." "Don't refactor anything outside this function." Constraints are gifts.

**Give context before asking for anything.** Before you make your wish, tell the genie about your kingdom. Before you prompt for code, give the AI your conventions, your constraints, your codebase's quirks.

The [junior developer mental model](https://blog.kilo.ai/i/175061191/the-mental-model-that-works) works here too. You wouldn't ask a new hire to "make this better" without explaining what "better" means in your codebase. Don't do it to your AI either.
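One way to make these three habits routine is to assemble prompts from named parts instead of typing them freehand. The sketch below is only an illustration of that idea: `build_prompt` and its parameters are hypothetical helpers, not part of any AI tool's API, and the section labels are an assumption about what reads clearly.

```python
def build_prompt(context: list[str], task: str, preserve: list[str], forbid: list[str]) -> str:
    """Assemble a prompt: context first, then the task, then constraints."""
    sections = [
        "Context:\n" + "\n".join(f"- {item}" for item in context),
        "Task:\n" + task,
        "Keep:\n" + "\n".join(f"- {item}" for item in preserve),
        "Do not:\n" + "\n".join(f"- {item}" for item in forbid),
    ]
    return "\n\n".join(sections)


prompt = build_prompt(
    context=["Code style follows utils/helpers.ts"],
    task="Refactor this function to use early returns instead of nested conditionals.",
    preserve=["All existing error handling", "The function signature and return type"],
    forbid=["Add new dependencies", "Refactor anything outside this function"],
)
print(prompt)
```

The point is not the helper itself but the forcing function: an empty `preserve` or `forbid` list is a visible reminder that you have not told the genie what to leave alone.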

## The Amelia Bedelia Upside

Reading those books to my kids, I've noticed something. Amelia Bedelia usually saves the day in the end. She makes amazing pie, or her literal interpretation accidentally solves a problem no one else could.

AI assistants are similar. Sometimes the literal interpretation *is* what you need. Sometimes "add error handling to this function" produces exactly the error handling you would have written, just faster.

The skill isn't in preventing all misunderstandings. It's in recognizing them quickly and iterating. The feedback loop between wish and result is instant now. That matters more than getting it right the first time.

## Making Your First Wish

If I had to summarize everything I've learned about prompting:

*Your AI is Amelia Bedelia with a photographic memory and the energy of a thousand junior developers. It will do exactly what you say. So say exactly what you mean.*

Include context. Be specific. Anticipate misinterpretation. And when it inevitably draws the drapes instead of closing them, laugh, clarify, and try again.

The genie has infinite patience. Use it.

---

*Your kids probably understand Amelia Bedelia better than you understand your AI assistant. Maybe that's the real lesson here.*

---

Title: The Death of Morality

Subtitle: How America abandoned moral reasoning for tribal loyalty

Description: America hasn't become immoral—it's become post-moral. We've abandoned principled reasoning for pure tribal alignment, making moral positions contingent on who's doing what rather than what's being done.

Date: 9/12/2025

Source: boleary.dev

Tags: Personal


**America has become a post-moral society.**

Not immoral - that would imply we still hold principles and are violating them. Post-moral...we've abandoned moral reasoning itself.

## Tribe First, Principles Never

Here's what I mean by post-morality: **Your moral position is determined by checking tribal alignment first.**

Watch how this works in practice. When Elon Musk was the electric car guy fighting climate change, one tribe loved him. When he bought Twitter and started posting different opinions, that same tribe decided electric cars were actually problematic. The other tribe, which had mocked Tesla drivers as virtue-signaling liberals, suddenly discovered that EVs were pretty cool actually.

The product didn't change. The climate impact didn't change. Only the tribal alignment changed - and with it, everyone's "deeply held" convictions.

## The Motivated Reasoning Machine

The psychological research on this is damning. [Jonathan Haidt's work](https://www.penguinrandomhouse.com/books/73535/the-righteous-mind-by-jonathan-haidt/) shows [we don't reason our way to moral positions](https://pubmed.ncbi.nlm.nih.gov/11699120/) - we have gut reactions and then rationalize them. But we've gone beyond that. We now have entire media ecosystems dedicated to providing pre-packaged rationalizations for whatever position our tribe needs us to hold this week.

**The principle never mattered - only who was doing it.** Remember when deficit spending was the ultimate evil? Until your party held power. Executive orders were tyrannical overreach? Until your president signed them. Tech monopolies were dangerous? Until they banned your opponents. Revealing powerful men who exploited women? Only if they voted wrong. Political violence was abhorrent? Unless it was your protesters burning the federal building. The grocery prices, the farmers, the gas prices - urgent crises that mysteriously vanished the moment the election ended.

**This isn't just hypocrisy**. *Hypocrisy* implies you still believe in the underlying principle. This is the complete abandonment of principles in favor of pure tribal loyalty.

## How We Rationalize Anything

We've gotten incredibly sophisticated at justifying obviously contradictory positions. We don't just flip-flop anymore - we develop elaborate theoretical frameworks for why the thing we opposed yesterday is actually good today.

Watch how quickly "free speech is not freedom from consequences" becomes "censorship is violence" depending on who's being censored. See how "lock them up" becomes "due process matters" depending on who's accused. Notice how "states' rights" becomes "federal override" depending on which policy we're discussing.

We've built an entire intellectual infrastructure designed to make our tribalism look principled. Academic theories, think pieces, and lengthy Twitter threads all devoted to explaining why our side's violence is speech but their side's speech is violence.

## The Cost: Moral Bankruptcy

When everything is justified by tribal loyalty, actual moral reasoning atrophies. We lose the ability to say "my side is wrong about this"...or even "this person who happens to also be on my side is wrong about this"...because that's tribal betrayal. We can't acknowledge good ideas from the other side because that's giving aid to the enemy.

What is so disturbing is watching the emotional reactions from friends whose political views I know well. When violence occurs, when someone is wronged, their response is entirely based on who did what to whom. Silence when the perpetrator is from their tribe. Outrage and dangerous rhetoric when the victim is one of their own. The wrongdoing **itself** becomes secondary to the tribal affiliation of those involved. Just another chance at a "gotcha" for the other side - actual humanity and empathy be damned! Actually, let me use some of that "empathy" to make the other side hurt more....

This moral bankruptcy isn't confined to social media or dinner table arguments - it happens at the highest levels of government. Leaders don't even pretend to be acting morally anymore, directly doing the opposite and being praised for it. And it's self-reinforcing: when we don't hold our leaders to any moral standard while still *claiming the moral high ground*, we teach everyone that principles are just weapons to use against the other side, not standards to live by.

## The Way Forward (If There Is One)

How do you restart moral reasoning in a culture that's abandoned it? How do you get people to examine principles when tribal loyalty is the only principle left?

As Jim VandeHei wrote after the Charlie Kirk killing, we need to ["break the fever. Or it might break us."](https://www.axios.com/2025/09/12/charlie-kirk-political-violence-america) The path forward isn't through more tribalism or better arguments for our side. It's through individual actions that chip away at this toxic dynamic.

- **Stay grounded.** The most extreme voices get amplified *because* they're extreme, not because they're representative.

- **Tone it down.** Stop dunking on bad takes. *Your outrage feeds the algorithm*, destroying our ability to think.

- **Turn it off.** During breaking news, step away. *The misinformation-to-truth ratio is staggering*.

- **Open up.** Ask questions from *curiosity*, not *judgment*. People are more complex than their tribe.

- **Practice principle.** Call out your side when they're wrong. Credit the other side when they're right. These small tribal betrayals rebuild moral reasoning.

The question isn't whether you engage in tribal thinking - you do. The question is whether you can occasionally step outside it and ask: would I support this if the other tribe did it? Would I oppose this if my tribe did it? Can I name a principle I'd maintain even if it cost my tribe power?

If you can't, then you're not engaged in moral reasoning. You're just playing team sports with higher stakes.

**The test is simple:** Would you support this if the other tribe did it? If not, you're not doing moral reasoning - you're playing team sports with democracy as the stakes.

---

Title: Hurry up and wait

Subtitle: The timeless struggle with time: A reflection on parenthood and mortality

Description: Parenthood and mortality are two of the most profound struggles humans face. The passage of time, both in the daily grind and the long-term perspective, is a constant reminder of our finite existence. This blog explores how we grapple with time, from the daily challenges of parenting to the anticipation of future events.

Date: 9/6/2025

Source: boleary.dev

Tags: Personal

One of the worst things that I think you can say to a new parent is "It goes by so fast, enjoy it!" When you have a newborn baby, time is one of those human constructs that goes out the window first. You're in survival mode—for both you and the baby.

In fact, it's babies that most convince me that time is one of the things human consciousness struggles hardest to understand, yet understands the least. Sure, it is natural for us to go through the days and weeks in cycles, sleeping at night and having a routine in our days. But no one tells a newborn that. I have four kids, and I'm fairly confident that with each of them in the first few months of life, I took them to the window in the wee hours of the night, pointed outside, and said, "Look. It's nighttime. This is when you're supposed to be sleeping," only to be met with a blank stare. Or maybe some questionable intestinal sounds.

And that is why it can be especially cruel to point out to new parents, who are just trying to survive hour by hour, minute by minute, on little to no sleep, clothing of questionable cleanliness, and certainly no personal hygiene routine, that they will "miss this one day." Perhaps they will, but even if you're right, now is not the time. When you're fighting for survival, reminders of time aren't helpful. In fact, they can hurt your ability to see what's right in front of you.

There's another phrase people say when talking about parenthood that I also think applies more broadly to the human desire and failure to grapple with time. "The days are long, but the years are short." This at least acknowledges the fact that days can be hard. Making meals, dealing with tantrums, band-aiding cut knees. The daily life of families makes for long days, ending up (often) in exhausted parents who are in bed minutes after the children are. And yes, as the kids grow and go off to school, play sports, spend more time with friends than at home, you can be nostalgic for the time they were more dependent on you for their every need. But two things can be true: that nostalgia and the fact that it is hard, _damn hard_, to have another human being rely on you that way.

Those two clichés—"It goes by so fast" and "The days are long but the years are short"—aren't the only clichés about time. In fact, humans have been grappling with the passage of time for so long that I think it may account for some of the oldest clichés in the book. It's challenging to pin down why time is such an obsession of the human mind. Perhaps it is what comes with a consciousness that is capable of understanding its own mortality. Knowing that a resource is finite causes any animal—human or not—to hoard and obsess over it.

With the consciousness of time comes another feeling. One that, for someone like me who is prone to a significant amount of anxiety, can be more devastating still. Anticipation. And yet another time cliché comes to mind whenever I think of that anticipation: hurry up and wait. Well...I can't wait.

It's difficult to decide what is worse to wait for—something good or something bad. In your adult life, you find many of both. A long-awaited vacation. Results of a biopsy. Visits from friends. The next shoe to drop at work. A big (good) milestone for your child. A big (bad) milestone for your child.

I find that all of these things can be complicated to handle as a creature acutely aware that the time we have to wait for them is, in the end, actually all we have. The pressure to both enjoy the time we have and plan for the future we know is inevitable can be daunting. This can be especially hard when dealing with grief and loss—or anticipating grief and loss. Because what, after all, are we worrying about losing when someone is sick or waiting for news from a doctor? It is time.

The thing that we share with each other. The thing that bonds us to one another. The thing that we only have so much of is - time. Time waits for no one. Time is of the essence. Time is money. Time flies when you're having fun. Time marches on. Only time will tell. Time is running out. Make time. Kill time.

There's no time like the present? The only time we have **is the present**.

And that is perhaps the most disturbing part of it all. We only have the present. Past and future are concepts, but only that. The only time we actually have is right now. That's what makes having a baby _and_ sending that child to school hard. That's what makes the daily routine _and_ anticipating the future hard. But it's also all we have, so we better make the most of it. While we still have the time.

---

Title: The 10 Year Old Trip: One Kid, One Parent, One Perfect Tradition

Subtitle: How we accidentally discovered the best parenting hack for large families

Description: When you have four kids, individual attention becomes a rare commodity. The '10 year old trip' tradition gives each child their moment to be the only decision-maker while creating memories that last a lifetime.

Date: 8/23/2025

Source: boleary.dev

Tags: Personal


I always say we have **too many kids**. Four kids, to be exact. It's somewhat in jest, but also... it's true. When you're trying to get a family of six moving anywhere, "vacation" becomes a relative term. We go to the beach, sure, but let's be honest—it's not a vacation for my wife and me. It's a trip. The kids have a blast, but for us? It's a lot of work.

That's why when my wife stumbled across another large family's [Instagram posts](https://www.instagram.com/kathrynwhitakertx/) about their "10 year old trip" tradition, it immediately resonated. Here was a family that understood the chaos, the constant compromises, the reality that individual attention becomes a rare commodity when you're outnumbered.

## The Tradition

The concept is simple: when each child turns 10, they get to pick one parent and one destination (within reason, in the continental United States) for a solo trip. Just them, one parent, and complete decision-making power.

Think about it—when do kids in large families ever get to make *all* the decisions? Usually, it's a negotiation between siblings, a compromise that leaves everyone partially satisfied but no one completely happy. Not on the 10 year old trip. This is their show.

## Our Adventures So Far

### Trip One: Legoland and Beyond

Our oldest son chose me and Legoland in Florida. Right off the bat, he was making the big calls. When I explained that staying on the Legoland resort property was expensive, but if we stayed off-property we could add a day at Harry Potter World with the money we saved, he didn't hesitate. "Let's do Harry Potter World too!"

The best part? I surprised him with a "last day before the plane home" trip to Cape Canaveral. He's obsessed with space and NASA, so watching his face light up at Kennedy Space Center was worth the extra driving. When you only have one kid to focus on, you can actually see these moments instead of managing three other meltdowns.

### Trip Two: Disney Magic

My daughter, two years younger, chose my wife and—in what I had expected would be the template for most 10 year old trips—Disney World. But here's where the tradition surprised us again.

Turns out, she's absolutely fearless on roller coasters. With just one kid to focus on, my wife could actually enjoy watching her daughter's pure joy instead of worrying about where the other three were or who needed what. They rode everything, multiple times, without the usual "but I don't want to" chorus from siblings.

### Trip Three: The Great Outdoors

This year, I'm heading to Yellowstone with our third. After two theme park adventures, we're going somewhere completely different: national parks, hiking, wildlife. And it makes sense as our son's break from his siblings. I'm sure he'd love Disney World, but he's a little more "introverted and skinned knees from climbing trees" than his older siblings, so this just fits. It's going to be fascinating to see how this plays out compared to the structured entertainment of the previous trips.

## The Real Magic

Here's what I've learned: when you're used to managing four kids, having just one is incredibly freeing. (My wife and I have a saying: "one is the new zero.") You can actually have conversations. You can be spontaneous. You can say "yes" to things you'd normally have to say "no" to because of logistics.

When we travel with all four kids, I don't call it a vacation. It's a trip. A vacation for the kids, but work for us parents. The 10 year old trip? That's _actually_ a vacation.

But beyond the logistics, there's something deeper happening. These kids get undivided attention in a way that's nearly impossible in daily life with a household of six. They get to be heard, to have their preferences matter completely, to experience what it feels like to both have to and get to make all the decisions for a few days.

## Why Structure Matters

This tradition works because it gives kids something concrete to look forward to. Structure and tradition aren't just nice-to-haves in large families—they're survival tools. The more predictable rhythms you can build into their lives (daily, weekly, etc.), the better they handle the chaos that inevitably comes with having siblings.

It's the same principle they tell you about with babies: structure helps them understand and adjust to the rhythms of life. But here's the thing—every kid is different. Some take to structure naturally, others push against it. The 10 year old trip works because it honors both needs: the structure of a family tradition and the individual exploration of personal choice.

## The Bigger Picture

What started as a practical solution to the "too many kids" problem has become something much more meaningful. Each child knows their turn is coming. They watch their older siblings go and start planning their own adventure. They learn that being part of a big family doesn't mean getting lost in the crowd—it means your individual moment will come, and when it does, it'll be completely yours.

The tradition also teaches them about decision-making in a low-stakes environment. Want to spend the whole day at one attraction? Your call. Prefer to hop around? Also your call. These are the kinds of choices they rarely get to make unilaterally at home.

## Looking Forward

As we gear up for Yellowstone, I'm curious how this outdoor adventure will compare to the theme park experiences. Will the lack of structured entertainment make the one-on-one time even more meaningful? Will hiking trails lead to deeper conversations than roller coaster lines?

What I know for sure is that this tradition has become one of our family's most treasured practices. It acknowledges the reality of large family life while creating space for individual connection. It gives each child something uniquely theirs in a household where sharing is the default.

And honestly? It gives us parents a chance to remember why we love spending time with our kids in the first place, without the constant referee duties that come with the full crew.

The 10 year old trip isn't just about the destination—it's about the rare gift of undivided attention in a divided world. For families like ours, that might be the most valuable vacation of all.

---

Title: Grace Hopper Would Love AI Coding

Subtitle: From machine code to natural language: Admiral Hopper's legacy lives on.

Description: Admiral Grace Hopper spent her career fighting the assumption that humans had to adapt to machines, insisting machines should understand us. Today's AI coding assistants are exactly what Amazing Grace predicted.

Date: 8/19/2025

Source: boleary.dev

Tags: AI, Tech, History

Admiral [Grace Hopper](https://en.wikipedia.org/wiki/Grace_Hopper) carried wire pieces [in her purse](https://www.youtube.com/watch?v=si9iqF5uTFk) - 11.8 inches for a nanosecond, nearly 1,000 feet for a microsecond. These weren't random lengths. They represented the maximum distance electricity could travel in those tiny time fractions.

She'd hand them to confused engineers during meetings, watching their faces change as the abstract became tangible.

*"I sometimes think we ought to hang one over every programmer's desk so they'll know exactly what they're throwing away when they throw away a microsecond."*
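The wire lengths follow from simple arithmetic. Here is a quick check, assuming the signal moves at the speed of light in a vacuum (an upper bound; signals in real copper wire travel somewhat slower):

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre
METERS_PER_INCH = 0.0254
METERS_PER_FOOT = 0.3048


def distance_m(seconds: float) -> float:
    """Distance light covers in the given time, in metres."""
    return SPEED_OF_LIGHT_M_PER_S * seconds


nanosecond_in = distance_m(1e-9) / METERS_PER_INCH
microsecond_ft = distance_m(1e-6) / METERS_PER_FOOT

print(f"{nanosecond_in:.1f} inches per nanosecond")    # 11.8 inches per nanosecond
print(f"{microsecond_ft:.0f} feet per microsecond")    # 984 feet per microsecond
```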

Would Admiral Hopper have used a Mac? Or Arch Linux btw?

But Hopper's real genius wasn't making time visible - **it was making [computers speak human](https://www.youtube.com/watch?v=1LR6NPpFxw4)**. She spent her career fighting the assumption that humans had to adapt to machines, insisting machines should understand us.

I think about those wires and Admiral Hopper when I'm prompting Claude in plain English and watching code appear. We're living through exactly what Amazing Grace predicted.

## Beyond the Model T

In 1983, when Morley Safer suggested the computer revolution was over, Hopper [disagreed](https://www.youtube.com/watch?v=1LR6NPpFxw4): *"We're only at the beginning. We've got the [Model T](https://en.wikipedia.org/wiki/Ford_Model_T). That's where we are now."*

She was right. If 1983 was the Model T, today's AI coding assistants are self-driving cars. We've gone from her [Mark I](https://en.wikipedia.org/wiki/Harvard_Mark_I) computer doing three calculations per second to language models processing 100,000 tokens per second while understanding natural language.

But again, the real revolution wasn't about speed—it was about making computers accessible to human thinking.

## The Vision She Fought For

When Hopper proposed English-like commands in the 1950s, colleagues said it was impossible. *"I was told very quickly that I couldn't do this because computers didn't understand English."*

But she had a different vision. She saw two kinds of users: *"people who liked using symbols—mathematicians and people like that"* and *"people who were in data processing who hated symbols, and wanted words."*

Her breakthrough came with [FLOW-MATIC](https://en.wikipedia.org/wiki/FLOW-MATIC) in 1955—the first programming language using English-like statements. Commands replaced cryptic symbols, and FLOW-MATIC can be understood even by today's programmers who may have never seen it before:

```
COMPARE PRODUCT-NO (A) WITH PRODUCT-NO (B) ;
IF GREATER GO TO OPERATION 10 ;
IF EQUAL GO TO OPERATION 5 ; OTHERWISE GO TO OPERATION 2 .
```
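For comparison, here is a rough sketch of the same branching logic in modern Python. The operation numbers come from the FLOW-MATIC snippet; the function name and the idea of returning the next operation number are assumptions made for illustration, since FLOW-MATIC jumped between numbered operations rather than returning values.

```python
def next_operation(product_no_a: int, product_no_b: int) -> int:
    """Mirror the COMPARE statement: pick the next numbered operation."""
    if product_no_a > product_no_b:   # IF GREATER GO TO OPERATION 10
        return 10
    if product_no_a == product_no_b:  # IF EQUAL GO TO OPERATION 5
        return 5
    return 2                          # OTHERWISE GO TO OPERATION 2
```

Seventy years apart, the two read almost the same, which is rather the point.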

But like all great languages, FLOW-MATIC's legacy lives on in something even bigger. And that would be [COBOL](https://en.wikipedia.org/wiki/COBOL), introduced in 1959 as the Common Business-Oriented Language. Programming finally looked less like advanced mathematics and more like structured English.

## Programming in Plain English

Hopper kept pushing for actual English sentences that computers could execute. Today, I write to GPT-5: *"Build me a React component that displays a user's profile with their avatar, name, and bio. Make it responsive and include a follow button."* And it works.

This isn't sophisticated autocomplete. These models understand context, intent, and implied requirements—exactly what Hopper envisioned.

The progression is clear:

- Machine code and punch cards (1940s-1950s)

- Assembly language and early compilers (Hopper's era)

- High-level languages like COBOL (1960s-1970s)

- Personal computers, BASIC and the like (Hopper's "Model T" era)

- Modern specialized high- and low-level programming languages (1990s-2000s)

- Natural language programming with AI (today)

We've reached Hopper's ultimate goal: the primary interface between humans and computers is natural language itself.

## Making the Magic Concrete

Just like Hopper with her wires, let's make LLMs less of a black box.

Think of tokens as LEGO bricks. Each token represents roughly three-quarters of a word. When you prompt an LLM, you're giving an impossibly fast robot LEGO bricks and asking it to build something meaningful.

That robot sees up to 128,000 bricks at once—that's roughly 400-500 pages of text or about 25,000 lines of code. It processes 50-120 bricks per second depending on the model.

Put that in perspective: A fast typist at 100 words per minute produces about 133 tokens (LEGO bricks) per minute, or about 2.2 per second. Modern LLMs like Claude Sonnet 4 (50-60 TPS) or Gemini Flash (~100 TPS) process 25-50 times faster than a speed typist.

In one microsecond, the time it takes electricity to travel Hopper's 984-foot wire, the LLM processes only about 0.00005-0.00012 tokens. A human typist needs 25-60 seconds to produce what the AI generates in one second.

In one full second? 50-120 tokens of coherent response. That's what would take a speed typist 25-60 seconds to type, but the AI is simultaneously understanding context, following instructions, and generating syntactically correct code.
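The arithmetic behind these comparisons is easy to check. The sketch below uses only the figures quoted in this post (0.75 words per token, a 100-words-per-minute typist, 50-120 tokens per second). Real throughput varies by model and load, so treat the results as rough estimates.

```python
WORDS_PER_TOKEN = 0.75          # "roughly three-quarters of a word"
TYPIST_WORDS_PER_MIN = 100      # the speed typist from the post
LLM_TOKENS_PER_SEC = (50, 120)  # throughput range quoted in the post

# 100 words/min -> ~133 tokens/min -> ~2.2 tokens/sec
typist_tokens_per_sec = TYPIST_WORDS_PER_MIN / WORDS_PER_TOKEN / 60

for tps in LLM_TOKENS_PER_SEC:
    speedup = tps / typist_tokens_per_sec  # also: seconds of typing per second of output
    per_microsecond = tps * 1e-6           # tokens produced in one microsecond
    print(f"{tps} TPS: {speedup:.1f}x a typist, {per_microsecond:.5f} tokens per microsecond")
```

The speedup figure does double duty: it is also the number of seconds the typist needs to match one second of model output.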

This isn't magic. It's very fast pattern matching at incredible scale, constrained by the same physical laws Hopper's wires represented.

## Time to Pay Attention

Admiral Hopper would agree: software engineers need to pay attention.

Too many developers make the same mistake Hopper's contemporaries made—dismissing fundamental shifts because they don't fit existing mental models. They see AI coding as "just autocomplete" or "vibe coding that won't last." Even worse, they say Hopper's most dangerous phrase: *"We've always done it that way."*

Hopper also understood: when you make computers more accessible to human thinking, you don't replace creativity—you amplify it.

Engineers who learned COBOL didn't become obsolete when it replaced machine code. They became more productive. The same thing is happening now, faster and more dramatically. Hopper also kept a counterclockwise clock in her office to remind people there was never any fundamental reason clocks had to run clockwise—just what people were used to.

There's no fundamental reason programming has to be about memorizing syntax and fighting compilers. That was just what we were used to.

## What Would She Have Thought?

I imagine Hopper pulling out LEGO bricks instead of wires. *"This is a token. Now imagine a machine that understands your entire blueprint and builds whatever you describe, as fast as electricity travels down this wire."* Then she'd pull out the LEGO Eiffel Tower. At 10,001 pieces, it would take an LLM just a minute or two to assemble. It takes humans about [15-20 hours](https://time2brick.com/?p=48). Thankfully, Chris Adams [time-lapsed his 14-hour build](https://www.youtube.com/watch?v=m6XN5GQ4BPg) to show it at LLM speed.

The parallels run deeper still:

- **Then**: COBOL made programming readable to business people

  **Now**: LLMs make programming accessible to anyone who can describe what they want

- **Then**: She created the first compiler to translate human-readable code

  **Now**: We have AI translating natural language directly into code

- **Then**: She fought "we've always done it that way" thinking

  **Now**: We need to challenge assumptions about what programming looks like

This isn't just about making programming easier—it's about democratizing computation itself.

Just as COBOL broke programming out of mathematicians' exclusive realm, AI is breaking it out of professional developers' exclusive realm. Product managers build prototypes. Teachers create educational tools. Kids make games by describing them.

## The Wire Forward

Hopper's nanosecond wire made the abstract concrete so people could reason with it and transcend it. We need to do the same with AI coding tools.

Stop treating them as magical black boxes. Understand them as very fast pattern matchers working with discrete meaning units at incredible scale.

Grace Hopper proved human-computer relationships didn't have to be adversarial—they could be collaborative. She laid the groundwork for everything we're experiencing now.

> Don't let yourself say "we've always done it that way."

I wish she could have seen Claude write COBOL from casual English. [Here's a program](https://gitlab.com/-/snippets/4880775) that prints the scale of a nanosecond, microsecond, and second - made from the prompt "Can you write me a COBOL program that prints out a scaled length of a nanosecond and a microsecond in the output?". Hopper would have smiled, pulled out a wire, and repeated what she told Safer: *"We're only at the beginning."*

To developers dismissing AI coding as a fad, I imagine her adding, *"Pay attention. We've moved beyond the Model T I talked about in 1983. This is what I've been telling you would happen for sixty years."*

**The future is human-friendly computing.**

Admiral Hopper would be proud—and she'd want you to [get on board](https://kilocode.ai/).

Originally published here: https://blog.kilocode.ai/p/grace-hopper-would-love-ai-coding

---

Title: When Republics Fail

Description: Examining historical patterns of democratic collapse and how the U.S. Constitution was deliberately designed with checks and balances to prevent executive overreach, legislative inaction, and public acquiescence that doomed republics like Weimar Germany and early Republican China.

Date: 3/20/2025

Source: boleary.dev

Tags: History


History offers sobering examples of republics that collapsed into authoritarian regimes. In each case we see common patterns. These include well-known collapses like the [Weimar Republic's fall into Nazi Germany](https://en.wikipedia.org/wiki/Weimar_Republic), lesser-known instances like the [early Republic of China (1912-1916)](https://en.wikipedia.org/wiki/Republic_of_China_(1912%E2%80%931949)) under Yuan Shikai, and many older attempts at permanent republican societies.

What does it look like? Ambitious leaders exploit crises to **overreach their executive power**. Lawmaking bodies **fail to check** these abuses in a meaningful way. And then ordinary people **acquiesce**, sometimes even welcoming strongman rule during chaos.

Although most of the failures we think of today occurred well after the American Revolution, the founders were keenly aware of these failure modes. They designed the U.S. Constitution with deliberate checks and balances to **prevent the fate of past republics**, drawing on lessons from history - and documenting many of those key insights in the [Federalist Papers](https://guides.loc.gov/federalist-papers/full-text).

I wanted to take a closer look at this, and writing this post became my excuse to do so. I want to focus on the three steps I mentioned (executive overreach, legislative inaction, and public acquiescence) and then think about how the United States is specifically designed to prevent the kinds of republican failures we've seen in the past.

**Table of Contents**

- [Executive Overreach: When Leaders Seize Too Much Power](#executive-overreach-when-leaders-seize-too-much-power)

  - [American safeguards against executive tyranny](#american-safeguards-against-executive-tyranny)

- [Legislative Inaction: When Parliaments Fail to Check Tyranny](#legislative-inaction-when-parliaments-fail-to-check-tyranny)

  - [American safeguards in the legislature](#american-safeguards-in-the-legislature)

- [Public Acquiescence: When Citizens Surrender Liberty for Security](#public-acquiescence-when-citizens-surrender-liberty-for-security)

  - [American perspective on public vigilance](#american-perspective-on-public-vigilance)

- [How the U.S. System Was Designed to Endure](#how-the-us-system-was-designed-to-endure)

- [Lessons for Today](#lessons-for-today)

## Executive Overreach: When Leaders Seize Too Much Power

A republic entrusts elected leaders with limited powers, not unchecked authority. **Executive overreach** occurs when a leader subverts these limits and accumulates power that no single branch should hold. The Founding Fathers warned that concentrating all government powers in one person or body is tyranny: “The accumulation of all powers, legislative, executive, and judiciary, in the same hands...may justly be pronounced the very definition of tyranny,” James Madison wrote in [Federalist No. 47](https://teachingamericanhistory.org/document/federalist-47-federalist-48-and-federalist-51/). To prevent this, the U.S. Constitution creates separate branches and distributes authority - making them "co-equal branches" of the federal government.

As [Federalist No. 51](https://avalon.law.yale.edu/18th_century/fed51.asp) explains:

> “Ambition must be made to counteract ambition.”

Each branch must have the means and motive to resist encroachments by the others. If _"men were angels, no government would be necessary,"_ but since they are not, the government must be obliged _"to control itself"_ through internal checks and balances.

So how has executive overreach actually broken down these checks and balances in history?

**Nazi Germany (Weimar Republic's collapse):** In the early 1930s, Germany's Weimar Republic was a democracy on paper, but economic depression and political deadlock created an opening for executive abuse. After Adolf Hitler was appointed chancellor in January 1933, he moved swiftly to dismantle checks on his power.

Exploiting emergency provisions, Hitler persuaded President Hindenburg to issue the Reichstag Fire Decree, suspending civil liberties. He then pushed through the [**Enabling Act of 1933**](https://en.wikipedia.org/wiki/Enabling_Act_of_1933), a law that gave Hitler's cabinet the power to enact laws without the Reichstag (parliament) or presidential approval. This Act effectively let Hitler bypass the constitution's checks and rule by decree, laying the legal foundation for his dictatorship.

By combining the offices of President and Chancellor after Hindenburg's death, Hitler completed his power grab and proclaimed himself _Führer_, wielding absolute authority. In short, Hitler **manipulated the democratic system to destroy democracy and create a dictatorship**. The Weimar Republic's fate shows how quickly an executive can accrue near-absolute power in a crisis, especially if constitutional safeguards are weak or ignored.

**China's First Republic:** The [**early Republic of China**](https://en.wikipedia.org/wiki/Republic_of_China_(1912%E2%80%931949)) offers a lesser-known but vivid example of executive overreach. After the 1911 revolution ended imperial rule, a constitutional republic was proclaimed in 1912. General **Yuan Shikai** soon became president, and rather than uphold republican institutions, he systematically undermined them.

In 1913, when the new National Assembly and elected parliament asserted themselves, Yuan resorted to violence and coercion. He allegedly ordered the assassination of Song Jiaoren, the leader of the majority [Kuomintang](https://en.wikipedia.org/wiki/Kuomintang) (Nationalist) Party, who was a pro-democracy advocate. Yuan then banned the Kuomintang, purged hundreds of opposition lawmakers, and ultimately dissolved Parliament outright in 1914.

Ruling by decree and backed by the Beiyang Army, Yuan stripped away the checks on his authority and even proclaimed himself Emperor in 1915. This was the ultimate act of overreach: **a president of a republic crowning himself monarch**. Yuan's power grab quickly eroded China's first experiment in republican governance. As one historian noted, **“China's first attempt at a democratic transition utterly failed”** once Yuan dismantled the nascent constitutional system ([A Forgotten Experiment | British Journal of Chinese Studies](https://bjocs.site/index.php/bjocs/article/view/54)). Within a few years, the republic had collapsed into warlordism - a direct outcome of one man concentrating all power in his own hands.

### American safeguards against executive tyranny

The framers of the U.S. Constitution, having _just_ defeated monarchical rule, created a presidency with significant but strictly limited powers. Presidential authority is systematically checked by Congress and the Judiciary at every turn.

Presidents cannot unilaterally create or modify laws; all legislation requires Congressional approval. Executive orders and emergency powers remain subject to judicial review and congressional limitation. Congress controls funding, conducts oversight, and can impeach presidents who exceed legal boundaries. This structure embodies Madison's principle that liberty requires that no branch exercise another's powers. Unlike in failed republics, American presidents cannot dissolve legislatures or rewrite the Constitution, even during crises. Washington himself warned in his [Farewell Address](https://avalon.law.yale.edu/18th_century/washing.asp):

> the spirit of encroachment tends to consolidate the powers of all departments in one, and thus create... a real despotism.

By design, an American president who attempted Yuan Shikai's or Hitler's path would quickly run up against Congress, the courts, the states, a free press, and ultimately the voters. This doesn't mean a president _could never_ abuse power, but the **hurdles are far higher** because of the constitutional circuit breakers installed by the Founders.

## Legislative Inaction: When Parliaments Fail to Check Tyranny

In a healthy republic, the legislature is meant to be a robust check on the executive. If the legislative branch becomes ineffective, cowardly, or complicit, an aspiring autocrat faces little resistance in taking control. The Founders understood that tyranny can arise not only from an over-mighty executive, but also from a **failure of the legislature to assert its authority**. As Madison observed, tyranny can spring from “the intrigues of the executive” if not adequately checked by a representative assembly. In [Federalist No. 51](https://avalon.law.yale.edu/18th_century/fed51.asp), he argued each branch must have the will and means to defend its own powers, making **“the legislative authority … to be divided”** and balanced so it cannot be easily usurped.

The U.S. Constitution thus vests Congress with extensive powers and internal checks (two houses with different constituencies and terms) to ensure energy and independence in the legislative branch. These measures were intended to prevent legislative paralysis or surrender in the face of executive ambition.

**Nazi Germany:** The demise of Germany's democratic legislature in the 1930s is a cautionary tale of legislative failure. The Weimar Republic's Reichstag was crippled by political infighting and economic paralysis, enabling Hindenburg to govern through emergency decrees, bypassing parliament.

By 1933, the Reichstag had already surrendered much of its authority. When Hitler proposed the Enabling Act to abolish parliamentary oversight, legislators capitulated without meaningful resistance. Centrist and right-wing parties voted to transfer full legislative powers to Hitler's cabinet for four years.

Though intimidation factored in—SS troops surrounded the building and opposition members were silenced—elected officials legally dissolved their own authority. This self-neutering removed the final check on Hitler's power. The Reichstag became merely ceremonial while Hitler's inner circle controlled lawmaking, a decisive step in democracy's collapse.

This underscores how a legislature's **weakness or indecision** can enable authoritarianism just as surely as an ambitious executive can.

**Early Republic of China:** In China's short-lived 1912-1916 republic, parliament failed to prevent autocracy due to Yuan Shikai's direct suppression.

Following the 1913 elections, the Chinese Parliament was tasked with drafting a constitution to limit presidential power. Yuan preemptively crushed this threat by forcefully dismantling the legislature. When lawmakers demonstrated independence, Yuan expelled opposition members en masse, stripping all 438 Kuomintang representatives of their seats and eliminating the majority party.

Without a quorum, parliament stalled, and by January 1914, Yuan dissolved it entirely, ending constitutional development. Unlike in Weimar Germany, Chinese legislators actively resisted, some even launching the "Second Revolution" uprising, but Yuan's military quickly crushed this opposition. The Chinese Parliament's fate demonstrates that constitutions are only as strong as the officials willing to enforce them; when legislators lack institutional strength to check executive overreach, written protections prove inadequate.

### American safeguards in the legislature

The U.S. Founders prioritized legislative strength, recognizing Congress as the people's true representative. They established it as co-equal - initially even considering it preeminent - and equipped it with powerful checks against executive overreach.

Congress alone can declare war, control spending, and confirm appointments. Its bicameral structure prevents hasty legislation and resists factional domination. Madison's principle that "ambition must counteract ambition" ensures each branch defends its authority when others encroach.

We've seen this system work before in American history:

* Truman's steel mill seizure was blocked by the Supreme Court in [Youngstown (1952)](https://en.wikipedia.org/wiki/Youngstown_Sheet_%26_Tube_Co._v._Sawyer).

* [Andrew Johnson's impeachment in 1868](https://en.wikipedia.org/wiki/Impeachment_of_Andrew_Johnson) - the first impeachment of a U.S. president - checked his violation of the Tenure of Office Act

* Nixon's impoundment of funds prompted Congress to pass the [Budget and Impoundment Control Act (1974)](https://en.wikipedia.org/wiki/Congressional_Budget_and_Impoundment_Control_Act_of_1974) and assert Congressional control over the purse

While this framework has generally succeeded, it depends on legislators actively using their powers. The Constitution provides tools but requires representatives with the courage to uphold their oaths regardless of party loyalty. This is a responsibility modern Americans must demand of their Congress.

When that equilibrium fails, history shows **democracy itself** is in peril.

## Public Acquiescence: When Citizens Surrender Liberty for Security

The final (and perhaps most crucial) factor in a republic's collapse is the role of the people themselves. Even the best-designed institutions can falter if the public either actively supports an authoritarian turn or passively allows it. **Public acquiescence** can take the form of voters electing anti-democratic leaders, or simply a population that - out of fear or despair - doesn't resist the erosion of their freedoms.

The Founders recognized that no constitutional safeguards can save liberty if the people do not value and defend it. Ultimately, as Madison noted, _"a dependence on the people is, no doubt, the primary control on the government"_. At the same time, the Framers understood that in times of crisis, people might trade liberty for security. This is why they created **"auxiliary precautions"** - checks and balances, independent courts, federalism with powers reserved to the states, etc. - to secure rights even when popular sentiment wavers.

Still, a republic's fate rests on its citizens' vigilance. When Benjamin Franklin was asked about the Constitutional Convention's result, he famously replied they had created _"a Republic, if you can keep it."_ This proviso underscores that public commitment is the ultimate safeguard. Franklin warned the government could:

> only end in despotism…when the **people shall become so corrupted as to need despotic government**.

If citizens grow indifferent or excessively partisan, or prefer a strongman's false comfort, no paper constitution will save the republic.

**Nazi Germany:** Weimar democracy's collapse required both Hitler's ambition and public acceptance of authoritarian rule.

Though the Nazis used terror against opponents, they also enjoyed genuine popularity. Many Germans willingly exchanged democratic freedoms for promises of economic recovery and national restoration. Millions, exhausted by unemployment and political dysfunction, embraced extremist parties.

Hitler explicitly promised both economic revival and "strong authoritarian government" - with many voters willing to sacrifice democracy for perceived strength and stability. Once in power, Nazi propaganda reinforced compliance by portraying Hitler's leadership as superior to democratic division. When Hitler implemented radical measures (e.g. [Night of the Long Knives](https://en.wikipedia.org/wiki/Night_of_the_Long_Knives) or the [Nuremberg Laws](https://encyclopedia.ushmm.org/content/en/article/the-nuremberg-race-laws)), public resistance was minimal.

By the time the true horrors of the Nazi regime became apparent, it was too late to effectively oppose it. Weimar's fall demonstrates how **democratic norms quickly erode when public faith falters**. Economic crisis and national resentment created demand for a "strong hand" that the Nazis exploited. Hitler succeeded not merely through force, but through a population that initially accepted democracy's dismantling from a mixture of hope, fear, and indifference.

**Early Republic of China:** In China's case, public opinion is harder to gauge given lower literacy and limited mass politics, but evidence shows many Chinese elites and citizens accepted Yuan Shikai's authoritarian turn, fearing continued instability.

Yuan's 1913-1914 crackdown faced resistance from Sun Yat-sen's supporters, but he also received significant backing from influential figures. The Progressive Party sided with Yuan against the Kuomintang, while reformers like Liang Qichao defended his harsh measures, calling rebels "more disastrous than deluge and wild animals"—prioritizing order over democracy. Tang Hualong even advocated "immediate extermination" of Yuan's opponents, revealing that even educated elites accepted strongman rule as necessary stabilization.

The general public, particularly in northern provinces under Yuan's military control, failed to defend parliament. After decades of imperial decline and civil conflict, many Chinese desired unity and strong leadership at the cost of republican principles. Only when Yuan declared himself Emperor did he lose support from provincial governors and military leaders. By then, the republic was effectively finished, collapsing into warlordism after Yuan's death. China's experience demonstrates how public acquiescence often stems from exhaustion—people may accept authoritarianism when they fear democratic instability more than dictatorial control. Without established democratic traditions, following a strongman became the path of least resistance.

### American perspective on public vigilance

The United States was founded on a paradox: profound _distrust_ of concentrated power, yet great _trust_ in the ordinary citizen. The Founders believed an informed citizenry forms the foundation of a lasting republic.

Recognizing human fallibility, they designed a system encouraging participation while tempering sudden passions. Regular elections ensure accountability, while staggered terms and independent institutions **prevent momentary majorities from permanently upending the system**. Hamilton recognized that popular majorities can make mistakes, so the Constitution filters public impulses without thwarting public interest. Madison argued in Federalist 63 that the Senate could check "people's momentary errors" through deliberation and long-term thinking.

The Bill of Rights protects fundamental liberties **even if a fearful majority would abandon them**. These mechanisms maintain freedom in **good times and bad**, when citizens are vigilant and when they aren't. The Founders emphasized civic education, with Adams noting, "Liberty cannot be preserved without a general knowledge among the people."

**The American system requires active public participation in safeguarding the republic**. Citizens must vote, question leaders, and reject blind loyalty to individuals or parties. Franklin's warning that government ends in despotism when people become corrupted implies _each generation must earn its republic anew_.

Modern Americans face this responsibility. Becoming polarized, apathetic, or fearful would repeat past republics' failures. Our Constitution provides the framework, but **depends on the people** to enforce it. America's democratic culture, while stronger than that of 1930s Germany or 1910s China, requires constant civic engagement and prioritizing country over party. Throughout our history, public courage, from voting out corrupt officials to exposing wrongdoing, shows a republic that has, thus far, chosen vigilance over complacency.

## How the U.S. System Was Designed to Endure

The examples of Weimar Germany and early Republican China underscore why the U.S. Founders were almost obsessive about **checks and balances**. They knew from their study of history (including the ancient Roman Republic's fall and the English Civil War) that giving any individual or group unchecked power was a recipe for disaster.

The Constitution's **separation of powers** and **federalism** (splitting authority between state and federal governments) create multiple barriers to tyranny. As Madison noted, in the “compound republic of America,” power is divided both horizontally and vertically - a “double security” for the rights of the people. If an authoritarian movement were to capture one center of power, the others could resist.

For instance, even if the executive branch veers toward autocracy, Congress can block its agenda, states can refuse unconstitutional directives, and courts can invalidate illegal orders. Conversely, congressional demagogues face presidential vetoes and judicial review. This system of **co-equal rivals, forced to cooperate but empowered to check each other**, was deliberately designed.

The American system also includes crucial safety valves absent in failed republics. **Regular, free elections** provide peaceful means to remove power-abusing leaders. **Freedom of speech** enables critics to raise alarms against authoritarian behavior before it's too late. An **independent judiciary** upholds rule of law against authoritarian impulses. And our constitutional culture ensures that even ambitious politicians typically justify actions in legal terms rather than dismissing constitutional constraints outright.

This culture serves as a vital safeguard: when President Nixon trampled legal boundaries during Watergate, even members of his own party chose Constitution over cult of personality, forcing his resignation rather than allowing the rule of law to crumble. Today's far deeper partisan divisions demand even greater moral courage - we urgently need leaders willing to risk political exile by standing against their own faction when constitutional principles are at stake, before our democratic guardrails collapse entirely.

## Lessons for Today

These collapsed democracies aren't distant curiosities - they are **urgent warnings**. The toxic combination that destroyed previous republics (social division, extreme polarization, charismatic demagogues, cowed legislatures, and citizens trading freedom for false security) already casts shadows across our own landscape.

America has weathered democratic crises before and faces profound challenges now. Our survival depends entirely on whether our institutions and civic culture possess the resilience to withstand mounting pressures. The constitutional architecture the Founders designed remains brilliant, but like any structure, it deteriorates without constant maintenance. That maintenance starts with answering hard questions:

* When an executive grasps for unconstitutional power, will Congress defend its authority with unwavering resolve—even against a president of their own party? Or will they, like the Reichstag, sacrifice democratic principles on the altar of partisan loyalty?

* When confronted with genuine national crisis, will Americans recommit to constitutional principles, or will we surrender to the seductive promise of a strongman who pledges to "do whatever it takes"?

* When courts face overwhelming pressure to validate unconstitutional actions in the name of "national security" or "emergency powers," will they stand as the Youngstown Court did, or capitulate like Weimar's judges?

Our judiciary's independence remains democracy's last line of defense.

We've resisted authoritarian temptations before: Washington rejected kingship by establishing the peaceful transfer of power; our military has maintained steadfast civilian subordination; and even after 9/11's trauma, we maintained public debate over security measures and implemented sunset provisions on emergency powers.

Today's fierce conflicts over executive authority, congressional oversight, and democratic norms aren't signs of failure - they're **vital signs** of a republic still fighting for its principles. A dying democracy wouldn't bother with such debates; it would slip quietly into autocracy's embrace.

And today, maybe more than ever, Washington's warning against the 'spirit of party' remains relevant:

> The alternate domination of one faction over another, sharpened by the spirit of revenge natural to party dissension...is itself a frightful despotism. But this leads at length to a more formal and permanent despotism.

The choice before us is stark: vigilant citizenship or democratic collapse. History awaits our answer.

**Sources and further reading:**

1. Madison, James. _Federalist No. 47_ (1788) - Warning against consolidation of power ([Federalist 47, Federalist 48, and Federalist 51 | Teaching American History](https://teachingamericanhistory.org/document/federalist-47-federalist-48-and-federalist-51/#:~:text=objection%20is%20founded,the%20federal%20Constitution%2C%20therefore%2C%20really)).

2. Madison, James. _Federalist No. 51_ (1788) - On checks and balances (“Ambition counteract ambition”) ([The Avalon Project : Federalist No 51](https://avalon.law.yale.edu/18th_century/fed51.asp#:~:text=administer%20each%20department%20the%20necessary,internal%20controls%20on%20government%20would)) ([The Avalon Project : Federalist No 51](https://avalon.law.yale.edu/18th_century/fed51.asp#:~:text=were%20to%20govern%20men%2C%20neither,oblige%20it%20to%20control%20itself)).

3. _Weimar Republic and Nazi Germany:_ United States Holocaust Memorial Museum, _Hitler's Rise to Power_ - on Hitler using legal means to destroy democracy ( [Hitler Comes to Power: How, When, & Key Dates | Holocaust Encyclopedia](https://encyclopedia.ushmm.org/content/en/article/hitler-comes-to-power#:~:text=In%20January%201933%2C%20Hitler%20did,democracy%20and%20create%20a%20dictatorship) ); Wikipedia, _Enabling Act of 1933_ - law giving Hitler dictatorial powers ([Enabling Act of 1933 - Wikipedia](https://en.wikipedia.org/wiki/Enabling_Act_of_1933#:~:text=Gesetz%20zur%20Behebung%20der%20Not,and%20balances%20in%20the%20constitution)) ([Enabling Act of 1933 - Wikipedia](https://en.wikipedia.org/wiki/Enabling_Act_of_1933#:~:text=The%20election%20was%20a%20setback,With%20the%20Enabling%20Act%20in)); USHMM Holocaust Encyclopedia - Nazi campaign promises of authoritarian government ( [Hitler Comes to Power: How, When, & Key Dates | Holocaust Encyclopedia](https://encyclopedia.ushmm.org/content/en/article/hitler-comes-to-power#:~:text=During%20election%20campaigns%2C%20Adolf%20Hitler,The%20Nazis%20promised%20to) ).

4. _Republic of China 1912-1916:_ Britannica, _Yuan Shikai_ - Yuan's undermining of parliament and self-crowning as Emperor ([Yuan Shikai | Chinese President & Warlord | Britannica](https://www.britannica.com/biography/Yuan-Shikai#:~:text=war%20lords%3B%20a%20permanent%20constitution,for%20life%20and%20then%20boldly)) ([Yuan Shikai | Chinese President & Warlord | Britannica](https://www.britannica.com/biography/Yuan-Shikai#:~:text=thus%20bringing%20about%20a%20revolt,forced%20to%20abolish%20the%20newly)); British Journal of Chinese Studies - details of Yuan dissolving Parliament in 1914 ( [A Forgotten Experiment | British Journal of Chinese Studies](https://bjocs.site/index.php/bjocs/article/view/54#:~:text=pro,a%20democratic%20transition%20utterly%20failed) ) and support from elites for his crackdown ( [A Forgotten Experiment | British Journal of Chinese Studies](https://bjocs.site/index.php/bjocs/article/view/54#:~:text=these%20rebels%20Yuan%20ordered%20the,cited%20in%20Shan%2C%202018%3A%20177) ).

5. Franklin, Benjamin. Quote and Constitutional Convention notes (Sept 17, 1787) - _“A republic, if you can keep it”_ ([September 17, 1787: A Republic, If You Can Keep It (U.S. National Park Service)](https://www.nps.gov/articles/000/constitutionalconvention-september17.htm#:~:text=,%E2%80%9D)) and warning that only a virtuous people can sustain free government ([September 17, 1787: A Republic, If You Can Keep It (U.S. National Park Service)](https://www.nps.gov/articles/000/constitutionalconvention-september17.htm#:~:text=they%20are%20such%3B%20because%20I,%E2%80%9D)).

---

Title: 2024 in Books

Subtitle: Another year of trying to read more

Description: A look back at my year in reading through 50 books and nearly 17,000 pages, featuring recommendations across fiction, non-fiction, and everything in between.

Date: 2/2/2025

Source: boleary.dev

Tags: Learning


# 2024 in Books

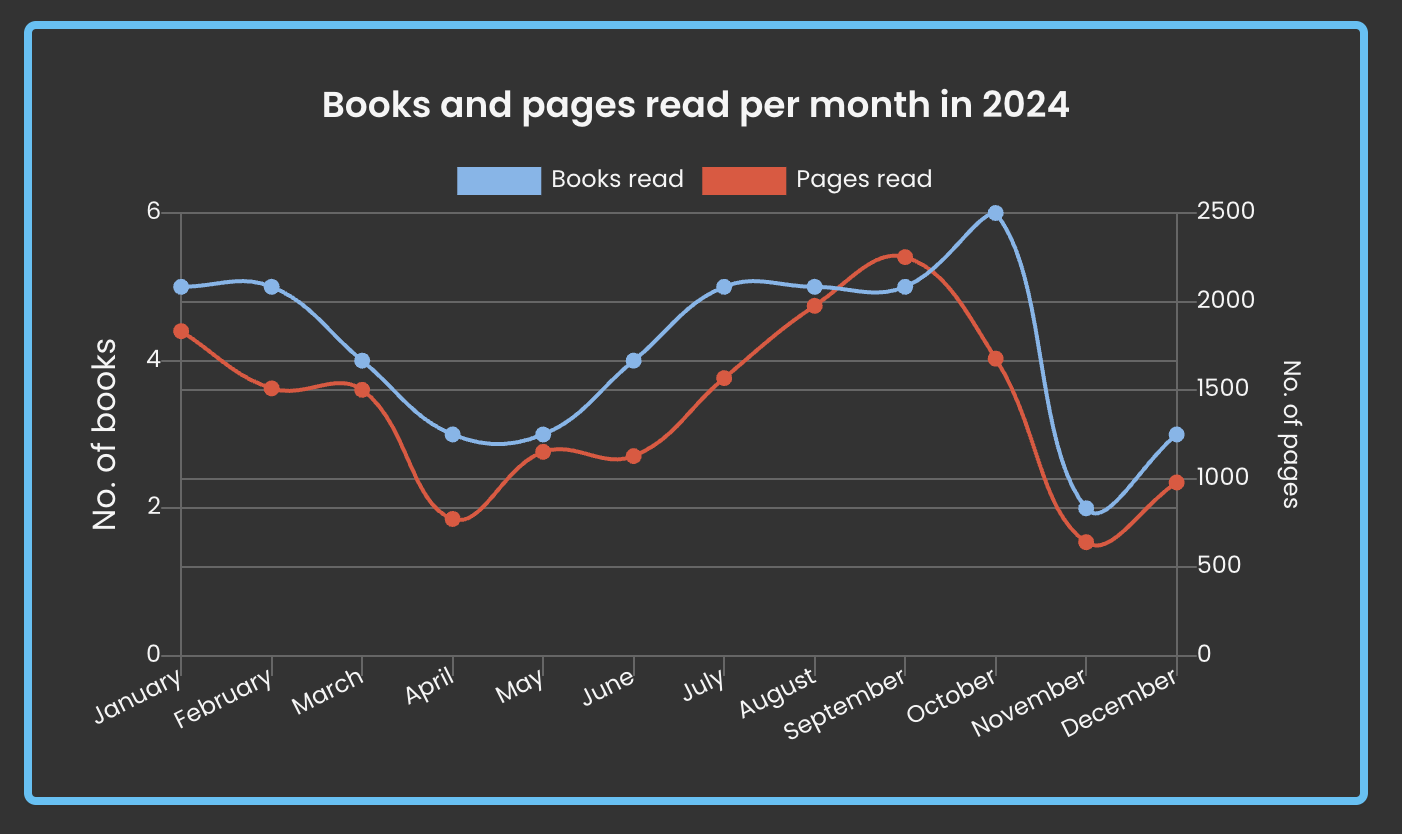

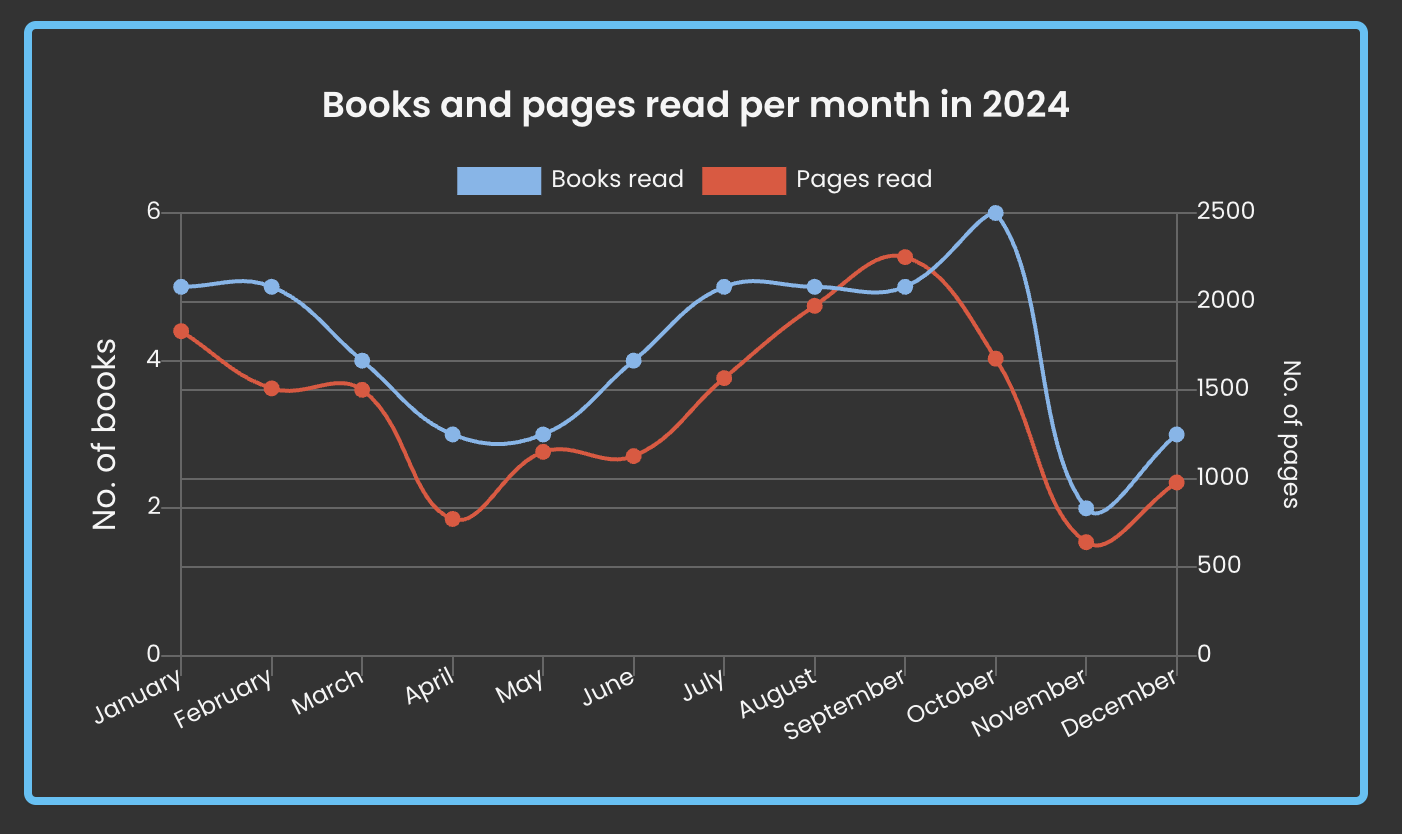

Like many years before, I aimed to be an avid reader in 2024 - and this time I actually succeeded! I finished 50 books across nearly 17,000 pages this year. Looking at my [StoryGraph](https://app.thestorygraph.com/) stats, it's clear that my reading habits continue to evolve and grow.

## Statistics

A few interesting breakdowns from my reading year, courtesy of The StoryGraph:

- Read 50 books total (up 6% from 2023!)

- The pace was split pretty evenly between medium (56%) and fast (44%)

- September was my most prolific month with 2,251 pages read

- July brought my highest average ratings at 5.0

- I explored works from 37 new authors this year, including David Grann, Michelle McNamara, and Bee Wilson

- 14 of the books were part of series

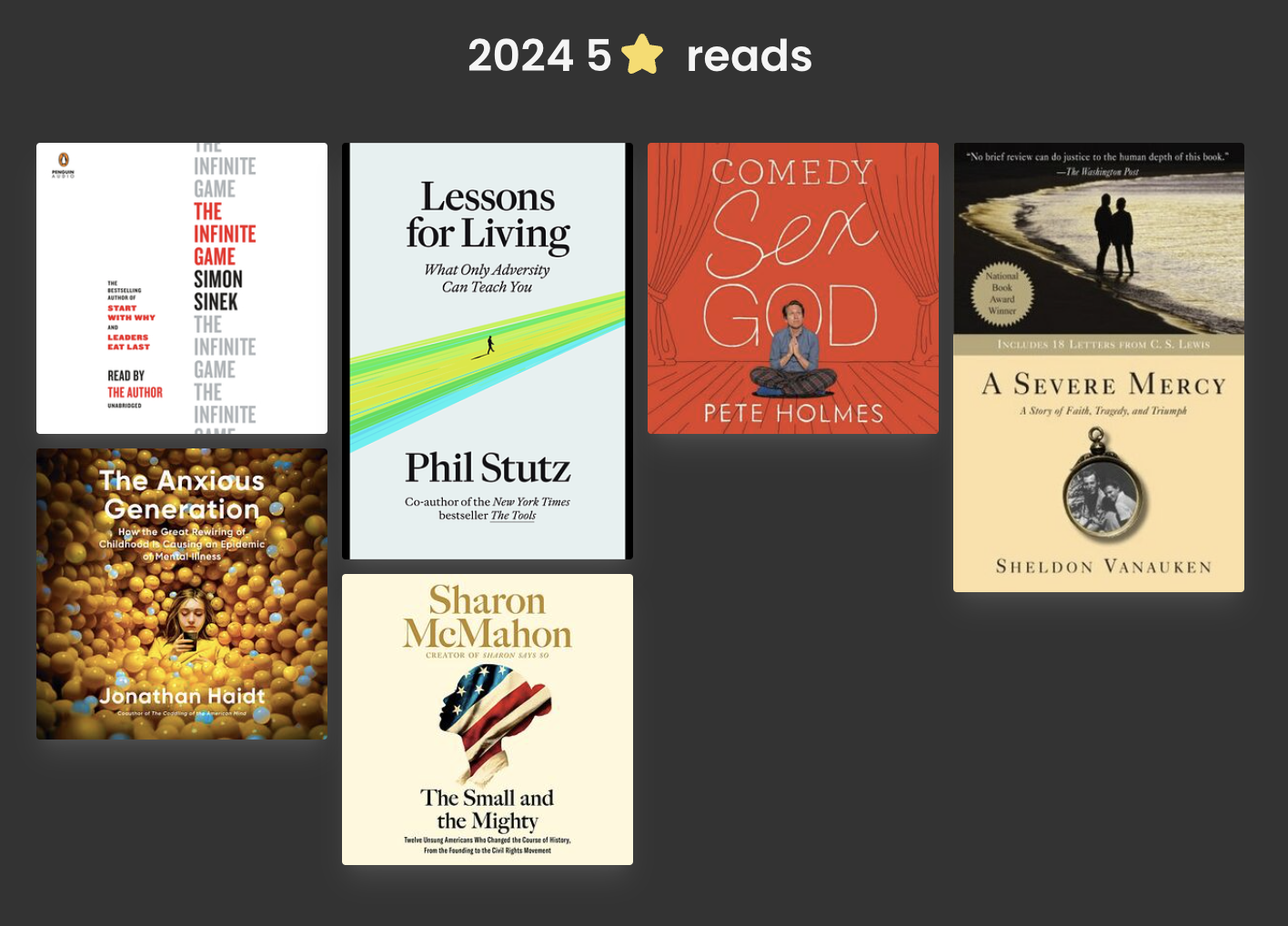

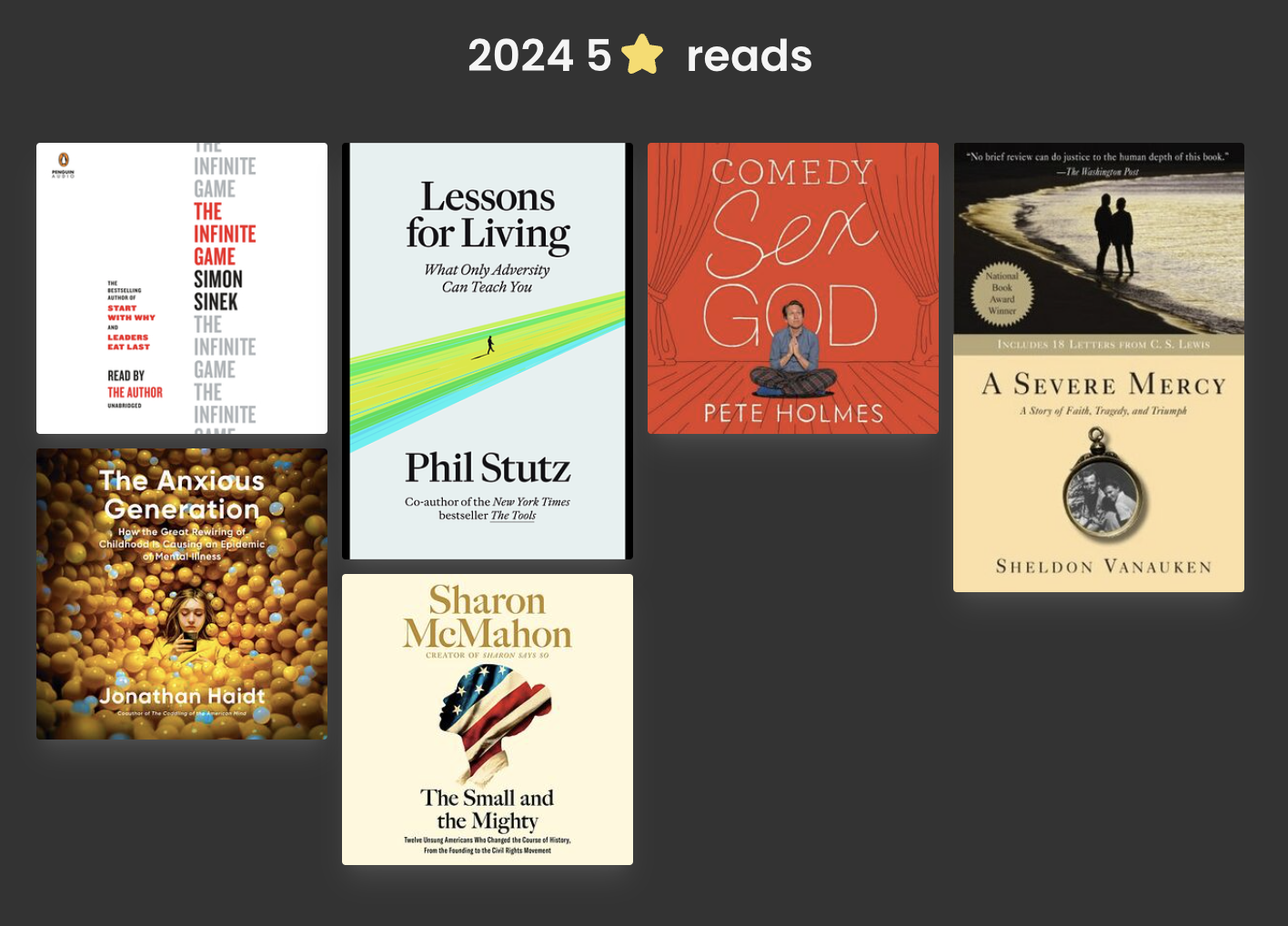

## Best of the Best

### Best Fiction

[**All the Light We Cannot See**](https://amzn.to/3Eh2J58) is a historical novel that follows a blind French girl and a German boy whose lives intersect amidst the devastation of World War II. It emerged as my favorite fiction read of the year. The interweaving narratives and masterful prose created an unforgettable reading experience that stayed with me long after turning the final page.

### Best Nonfiction

[**The Anxious Generation**](https://amzn.to/3Ei0JcC): *How the Great Rewiring of Childhood Is Causing an Epidemic of Mental Illness* stood out as the most impactful nonfiction book I encountered this year. Its insights into modern challenges and practical wisdom make it essential reading for understanding our current moment. It has been widely (and rightfully) praised as a transformative work that courageously confronts the emotional undercurrents of our time.

## Must Reads

Here's a curated selection that I think deserves special attention:

### Must Read for Parents

[**The Anxious Generation**](https://amzn.to/4aMdxEy) provides crucial insights for anyone raising children in today's complex world. While potentially uncomfortable at times, it offers practical frameworks for supporting the next generation. It's a must-read for anyone who wants to understand the emotional challenges of raising children in today's world, and it lives up to the hype you've probably heard about it.

### Must Read for Spiritual Seekers

[**Comedy Sex God**](https://amzn.to/40JPP7j) might seem like an unusual recommendation for spiritual reading, but its unique perspective on faith, meaning, and personal growth makes it a standout choice for anyone exploring their spiritual path.

### Must Read for Americans

[**The Small and the Mighty**](https://amzn.to/4gp0Ss0) offers essential stories that reshape our understanding of American history through the lens of overlooked change-makers. It's the kind of book that makes you question what else you might not know about our shared history.

## Highly Recommended

While there weren't many books I truly disliked this year, several stand out as particularly worthy of recommendation:

[**C.S. Lewis's Short Stories**](https://amzn.to/3CIgtoP)

Even though I have read much of Lewis's work, these stories surprised me. They showcase his mastery of narrative while carrying the same philosophical weight we expect from his other works.

[**Magic Pill**](https://amzn.to/3WFcksL)

This book flips our assumptions about health and wellness on their head. It strikes a balance between being scientifically sound and profoundly human.

[**Turn the Ship Around**](https://amzn.to/4jGKqWX)

Having witnessed my fair share of organizational shifts, I found that this book hit home. It's not your run-of-the-mill business book; it's a guide to leading meaningful change while holding onto what truly matters.

[**The Invisible Life of Addie LaRue**](https://amzn.to/3CHihyo)

Perhaps the most inventive premise I encountered this year. The story's meditation on memory, legacy, and what makes a life meaningful is haunting in the best possible way. The fact that 568,701 other StoryGraph users shelved this book suggests I'm not alone in my appreciation.

[**The Three-Body Problem**](https://amzn.to/3WJrX26)

Science fiction at its finest - thought-provoking, expansive, and deeply original. While the concepts can be challenging, the payoff is more than worth the mental investment.

[**Killers of the Flower Moon**](https://amzn.to/3WJrYTI)

Reading this before watching the film adaptation proved to be the right choice. David Grann's meticulous research and compelling narrative style bring this tragic piece of American history to life in a way that demands attention.

[**Finders Keepers**](https://amzn.to/3WIrOMs)

A thriller that manages to be both a page-turner and a meditation on the nature of storytelling itself. It's rare to find a book that succeeds both as entertainment and as literary commentary.

## What's Next?

Looking ahead to 2025, I'm already building my to-read list. The stats show I had a good balance of fiction and nonfiction this year, but I might try to push myself into some new genres. According to StoryGraph, my highest-rated reads tended to be reflective, informative, and adventurous with a slower pace - something to keep in mind as I choose my next books.

I'm particularly interested in exploring more works from the authors I discovered this year. Among the 37 new-to-me authors, several have extensive backlists I'm eager to dive into.

💡 A huge "thank you" to [The StoryGraph](https://app.thestorygraph.com/) - an independent alternative to Goodreads from [Nadia Odunayo](https://nadiaodunayo.com/) that helps me track all of these great books and statistics.

Remember, these are just my personal picks based on my own journey with these books. I'm really curious to know what you read in 2024 and what's on your radar for 2025—drop your suggestions in the comments below!

## All Books Read in 2024

## January

- *The Paris Apartment* by Lucy Foley

- *The Infinite Game* by Simon Sinek ⭐⭐⭐⭐⭐

- *Killers of the Flower Moon* by David Grann ⭐⭐⭐⭐

- *Dune Messiah* by Frank Herbert

- *Lessons for Living* by Phil Stutz ⭐⭐⭐⭐⭐

## February

- *All the Light We Cannot See* by Anthony Doerr ⭐⭐⭐⭐

- *Lessons in Chemistry* by Bonnie Garmus ⭐⭐⭐

- *Originals: How Non-Conformists Move the World* by Adam M. Grant

- *Day by Day with Saint Joseph* by Ken Lasch, Joseph Champlin

- *Finders Keepers* by Stephen King ⭐⭐⭐⭐½

## March

- *End of Watch* by Stephen King

- *Leaders Eat Last* by Simon Sinek ⭐⭐⭐½

- *Sex Talks* by Xander Marin, Vanessa Marin

- *Radical Candor* by Kim Malone Scott

## April

- *The Lincoln Lawyer* by Michael Connelly

- *The Brass Verdict* by Michael Connelly ⭐⭐⭐⭐

- *The Art of Writing and the Gifts of Writers* by C.S. Lewis

## May

- *I'll Be Gone in the Dark* by Michelle McNamara ⭐⭐⭐⭐

- *Masters of Greek Thought* by Robert C. Bartlett

- *Dust* by Hugh Howey ⭐⭐⭐⭐½

## June

- *The Three-Body Problem* by Cixin Liu ⭐⭐⭐⭐

- *Magic Pill* by Johann Hari ⭐⭐⭐⭐½

- *Short Stories* by C.S. Lewis ⭐⭐⭐⭐½

- *Oath and Honor* by Liz Cheney

## July

- *Last Boat Out of Shanghai* by Helen Zia

- *A Long Walk to Water* by Linda Sue Park

- *The Dark Forest* by Cixin Liu

- *Nuclear War: A Scenario* by Annie Jacobsen

- *Comedy Sex God* by Pete Holmes ⭐⭐⭐⭐⭐

## August

- *1923: The Crisis of German Democracy* by Mark William Jones

- *The Invisible Life of Addie LaRue* by V.E. Schwab ⭐⭐⭐⭐¾

- *Where the Mountain Meets the Moon* by Grace Lin

- *Out of the Silent Planet* by C.S. Lewis ⭐⭐⭐⭐

- *After Steve* by Tripp Mickle

## September

- *Fight Club* by Chuck Palahniuk ⭐⭐⭐¾

- *The Giver of Stars* by Jojo Moyes ⭐⭐⭐½

- *First Bite: How We Learn to Eat* by Bee Wilson ⭐⭐⭐

- *The Woman in the Window* by A.J. Finn ⭐⭐⭐¾

- *Death's End* by Cixin Liu ⭐⭐⭐½

## October

- *Perelandra* by C.S. Lewis

- *Turn the Ship Around!* by L. David Marquet ⭐⭐⭐⭐½

- *The Power of Myth* by Bill Moyers, Joseph Campbell ⭐⭐⭐⭐

- *If We Break* by Kathleen Buhle

- *A Severe Mercy* by Sheldon Vanauken ⭐⭐⭐⭐⭐

- *The Deadline: Essays* by Jill Lepore

## November

- *The Hitchhiker's Guide to the Galaxy* by Douglas Adams

- *Creativity, Inc.* by Ed Catmull, Amy Wallace

## December

- *The Restaurant at the End of the Universe* by Douglas Adams

- *The Anxious Generation* by Jonathan Haidt ⭐⭐⭐⭐⭐

- *The Small and the Mighty* by Sharon McMahon ⭐⭐⭐⭐⭐

---

Title: Americans found their X (not Twitter)

Subtitle: What Xiaohongshu can teach us about international relations

Description: In a delightfully ironic twist, the U.S. government's TikTok ban has pushed Americans toward Xiaohongshu (a more explicitly Chinese government-controlled app), highlighting the growing disconnect between how governments view the world and how their citizens actually live and connect in it.

Date: 1/15/2025

Source: boleary.dev

Tags: Tech

There's something delightfully ironic about the U.S. government's TikTok ban pushing Americans toward Xiaohongshu (translated as "Little Red Book" but starting to be called "Red Note" in the US), an app that is more explicitly controlled by the Chinese government than TikTok ever was. The memes that have come out of this irony are top tier.

But beyond the irony lies a profound lesson about the growing disconnect between how governments view the world and how their citizens actually live in it.

## The Disconnect

The government's reasoning for banning TikTok centers on concerns about data collection and foreign influence. And while these aren't invalid concerns in theory, the reality on the ground tells a different story. In the same month that Congress focused on banning an app primarily used for dance videos and cat memes, we've seen [unprecedented drone activity over critical infrastructure in the Northeast](https://wapo.st/42b0PwI) and learned about what's being called ["the worst telecom hack in our nation's history"](https://wapo.st/40x9sAv) - where Chinese hackers gained access to actually listen to phone calls and read text messages. Like...all of them.

This stark contrast between what governments prioritize and what actually threatens security isn't just ironic - it's deeply telling about how disconnected policy decisions have become from reality.

## Beyond Politics: Human Connections in a Digital Age

What's even more fascinating, and reinforces this point for me, is watching what's happening on Xiaohongshu right now.

Note: I'm going to start calling Xiaohongshu "X (not Twitter)" to piss off Elon. I'll also be calling Elon's X "Twitter (not X)".

Regular Americans and Chinese citizens are coming together, sharing pictures of their everyday lives, helping each other with language practice, and creating shared cultural moments. Americans are captioning their posts in Mandarin while Chinese users create English language tutorials, all while building genuine human (and many, many cat) connections that transcend geopolitical tensions.

I've seen this before. In times of conflict, regular people find ways to connect **despite** their governments' stances. The human desire to share and support often overcomes imposed barriers.

When Russia invaded Ukraine, I saw this firsthand: European colleagues offered homes to Ukrainian teammates, and Russian colleagues openly condemned their government, many seeking to leave. These human connections and reactions were profoundly different from the way governments discussed and treated each other.

## Security Theater vs. Real Threats

Let's be clear: while Congress focuses on banning TikTok over theoretical concerns, we have concrete evidence of telecom security breaches that could let foreign actors monitor unencrypted communications. We've also seen unexplained drone activity over sensitive sites—real national security issues needing immediate attention.

Data privacy concerns aren't unique to Chinese companies. For decades, foreign and domestic firms have mishandled user data. Remember Cambridge Analytica? That wasn't a foreign government—it was Facebook allowing data harvesting to manipulate millions of Americans for political gain. Many companies still treat data as a commodity, not something to protect.

If governments truly want to protect privacy and security, they could look to Europe's GDPR. It's not perfect, but it tackles the real issue: protecting citizens' data from misuse by any entity, foreign or domestic. Instead, we get Cold War-era paranoia disguised as policy.

The TikTok ban and migration to X (not Twitter) expose an uncomfortable truth: the government prioritizes domestic business interests over citizen privacy. When Meta or Google lose market share to TikTok, it's a “national security crisis.” But when those same companies mishandle data? Silence.

This isn't just hypocrisy—it's a failure to protect citizens while shielding corporate interests. Americans see through it. The move to X (not Twitter) isn't teenage rebellion; it's a clear signal that people recognize the difference between real security measures and political theater.

## What This Really Tells Us

The migration to X (not Twitter) reveals something fundamental about our increasingly connected world: the traditional model of national governance is struggling to keep pace with how people actually live and connect in the digital age. Governments still operate in a world of clear boundaries and binary choices - us vs. them, secure vs. insecure - while their citizens live in a much more nuanced reality.