Understanding what we don't understand in AI

Why we need more people like Timnit Gebru

"I know enough to be dangerous" is a phrase I often apply to myself when it comes to technical concepts. I'm not a classically trained computer scientist, but as a technologist and developer I nonetheless know that (a) I know more than the 'average' person about technology, but (b) there are a lot of people who know more than me.

Probably nowhere is this more true than with artificial intelligence and machine learning. There are a LOT of opinions on the current and future state of this branch of technology and science, but I know that I know enough to be dangerous. One way I can be "dangerous" is that I know we're not looking at a Skynet-type scenario anytime soon (Skynet is the AI system in the Terminator movies that becomes sentient and seeks to destroy humanity). I don't need to look any further than my kids asking Alexa what they think are "simple" questions that I know she has no hope of answering.

At the same time, I know that the technology that already exists is pretty dangerous in the wrong hands. Maybe not in the same big-screen way we see in Terminator, but in some ways worse and more insidious. That's one reason I recommend that everyone I know watch the Netflix documentary The Social Dilemma, to understand the impact that learning algorithms can have on our social lives online. But I also know that there is much more on this topic that I don't know, which is why I was so disheartened to hear of Google's firing of Dr. Timnit Gebru.

Timnit has devoted their professional career to researching and understanding how AI can benefit and detract from society. I'm incredibly sorry that Timnit has to deal with this personally. I'm sure they will continue to deal with lawyers, with Google, and with the fallout from this incident in both their personal and professional life. At the same time, I hope that everyone, inside and outside of technology, can learn from this. I'm glad Google has shown us all quite clearly where they stand on one of the most critical issues of our time: how are we going to use AI/ML ethically and responsibly?

I love technology; it's fantastic. It can positively impact humans. Technological advances in medicine and agriculture, and even the Industrial Revolution itself, have solved major problems facing humankind. I believe technology has objectively made life more comfortable, safer, and better. But the reckless application of technology without either (a) understanding of its ethical consequences or (b) the desire to act on them has also shown us the other side of this coin time and time again.

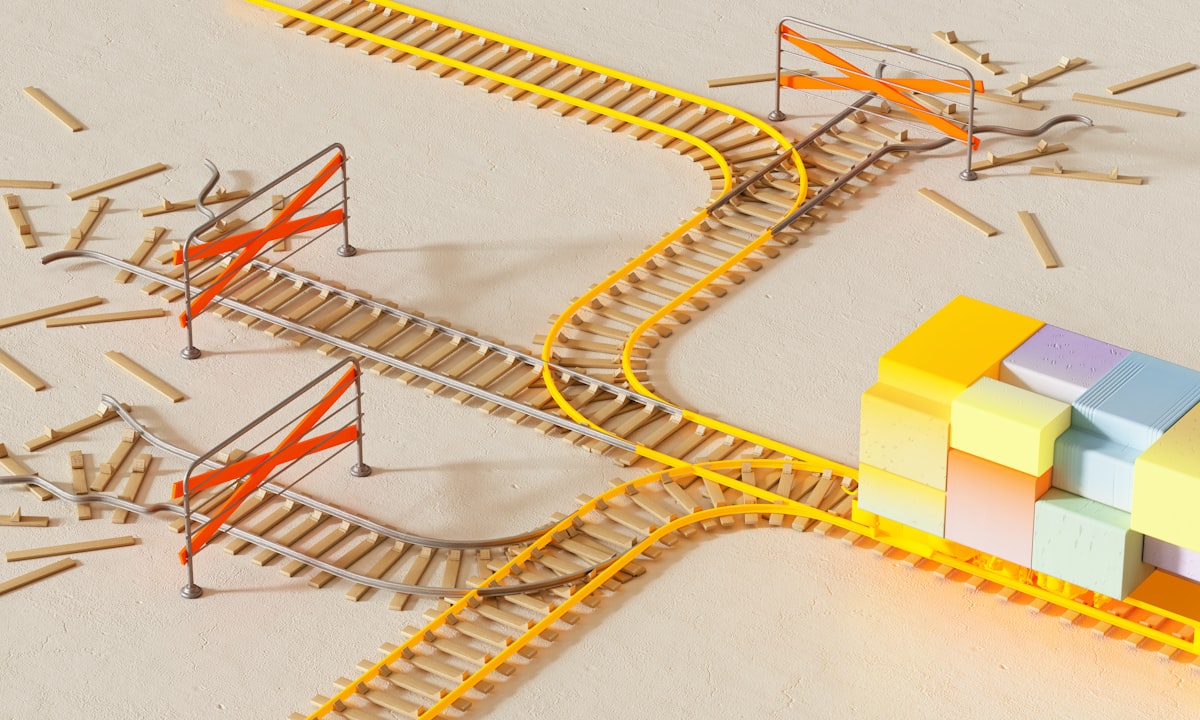

Climate change, global surveillance, you name it. Much like everything in life, new technology has potential for both good and evil. And if you're not careful, if you're not intentional, you can cause a lot of harm even while changing things for the better. If we haven't learned the lesson history has been trying to teach us, we'll keep repeating the same mistakes. AI/ML is at a critical point in its life: we can choose to let it grow unbounded, but we KNOW that will eventually have significant negative consequences.

And what's even worse? We KNOW those consequences will disproportionately impact folks who have traditionally been marginalized or are in the minority. Those of us who understand even a little about AI/ML recognize that it is uniquely capable of making this problem worse.

But that is where my comprehension ends. That is why it would be dangerous for me to go further. And that is EXACTLY why folks like Timnit, who understand the technology way better than I do, and thus WAY better than the average person does, are so critical. And if large tech companies, which can put their thumb on either side of this scale, choose not to allow dissenting opinions, ethical questioning, and solid scientific research in the room... we've already lost.

That's when things get truly dangerous.
